We interviewed 17 research industry leaders to identify how they are successfully leveraging AI today.

This report summarises the strategies and best practices these leaders are taking into 2026. We thank those interviewed for their candour and thoughtfulness (and those of you who had to remain anonymous, you know who you are!).

Judd Antin, Consultant and Academic

Dan Busso, Staff UX Researcher, Meta

Lindsey DeWitt Prat, Director, Bold Insight

Jess Holbrook, Head of UX Research, Microsoft AI

Soojin Jeong, AIUX Research Lead, Google DeepMind

Katie Johnson, Global Head of Research, Panasonic Well

Sohiit Karol, Senior Staff UX Research Manager, Google

Sam Ladner, Principal Researcher

Lotta McAlpine, Director of Product Insights, Spotify

Emanuel Moss, Senior Research Scientist, Intel Labs

Heli Rantavuo, Head of Customer Insight, OP Financial Group

Other industry leaders: Global Insights Lead, CPG; Head of Insight, CPG; UX Research Director, Finance; Research VP, Fintech; Member, AI Research Council, Telecoms; Senior Staff UX Research, Wearable Tech

“If you're not using and embracing AI, this company is not right for you. That doesn't mean you have to transform everything you do. My advice is to stay curious and lean in.” – Lotta McAlpine, Director of Product Insights, Spotify

Synthetic Users, NotebookLM, Deep Research, AI-Moderated interviews. Three years after the launch of ChatGPT, AI is no longer the theoretical future for research. It’s here today.

Research leaders are at a crossroads. They must consider what these new capabilities mean for their team and operating model, and make decisions that could define the role of research in their organisation for years to come. Many are under intense pressure to develop a strategy quickly, often in the service of efficiency, or because non-research teams are adopting these tools anyway. The proliferation of "research slop" underscores the urgency to act now.

The stakes are high. Get the approach wrong and research could lose credibility and relevance. Get it right, and AI can strengthen research’s role as a centre of competitive advantage.

We interviewed 17 industry leaders, most of whom head up large teams of researchers within major technology-led organisations.

We wanted to get beneath the surface to understand the emerging evidence from first-hand experience today. Which AI investments and experiments were working? What sounded good on paper but was failing to add value in practice? What is the right approach based on their experience to date?

Across the conversations we identified four strategies and 12 tactics that leaders were following successfully to maximise the value of AI.

A matrix illustrating the playbook’s four strategies of success. The four quadrants are: 01 Automate narrow tasks (top left), 02 Establish quality guardrails (top right), 03 Invest in the edge (bottom right), 04 Distribute established knowledge (bottom left).

“As a research function, we set ambiguous goals over long time frames. So at a global level research is a Reinforcement Learning nightmare! But I think at the local level you can [automate]” – Jess Holbrook, Head of UX Research for Microsoft AI

Across teams, leaders pointed to a rising set of personal, tightly scoped tasks where AI simply works. Dan Busso (Meta) uses internal tools to turn dense documents into “clear, actionable bullet points”, emphasising that the benefit is speed, not capability. A Research Director in Financial Services does the same for slide decks and for smoothing internal communication, using AI “wordsmithing” to move faster through revisions.

Within technical flows, automation can reduce friction. Katie Johnson (Panasonic Well) described “speaking to my sheets instead of using macro code”, asking AI to “create a table that helps me understand the relationship” between data points. What once required knowledge of complex formulas now happens through a conversation.

Leaders are also turning to AI for quick knowledge checks. Judd Antin (Consultant and Academic) now uses AI for 50–70% of what he used to search—fast fact-finding, rapid topic scanning and clearing cognitive clutter. Across all of these cases, the pattern is clear: AI is becoming a frictionless first pass. It handles the routine tasks that used to interrupt momentum so researchers can focus on the interpretive, strategic, and relational work where human judgement matters most.

Automation is already delivering real value in the parts of the workflow that are structured, repeatable, and time-intensive. A member of an AI Advisory Council governing the research function of a global telecom noted that AI shines at “repetitive kinds of tasks that humans are not so good at”, like processing hundreds of verbatim responses or surfacing early thematic clusters.

Lotta McAlpine (Spotify) saw similar gains at the front end of research for work that follows a familiar blueprint: personalising templates, suggesting the structure of a discussion guide, setting up projects and summarising topline observations. AI speeds up these tasks because they’re predictable. The pattern here is consistent: automation works best in the “sweet spot” of projects that are “not too complex nor too deep”, and always under the “close collaboration of a researcher”. When humans lead and AI assists, the workflow accelerates.

Research leads are using AI to adopt the vantage points of different audiences, especially in highly specialised, cross-functional or multilingual environments. This is invaluable in challenging priors and improving the quality and relevance of the work. As Dan Busso (Meta) put it, “I use prompts to tailor [my communication] to frame and prioritise my insights for a specific set of stakeholders”.

For those communicating in a second or third language, this support is even more transformative. As Soojin Jeong (Google DeepMind) explained, “sometimes I ask AI to imagine you’re an engineer having a discussion with a researcher…especially when I’m speaking to senior level executives”. Far from diminishing human communication, LLMs are helping researchers speak the language of their collaborators, easing linguistic barriers and deepening their effectiveness across the organisation.

A mock-up image of NotebookLM interface. A feature titled “Customise Audio Overview,” in which the LLM asks what the AI host should focus on in a generated podcast-style overview. In this example, the user enters the request, “Share the report summary from an engineer’s perspective.”

Efficiency gains mask significant risks when automation is deployed without rigorous quality checks. Leaders warned that the speed and fluency of AI can create a false sense of reliability, especially in parts of the workflow where early-stage errors cascade downstream.

Automatic speech recognition emerged as a particularly fragile link. Emanuel Moss (Intel Labs) described it as a potentially “dangerous tool” because domain-specific terms are routinely “glossed” into more common corpus expressions—“ChatGPT” turning into “Chad Djibouti”, for example. These mistakes are rarely obvious, and yet they shape the primary data on the basis of which decisions are made.

The risk is not merely inaccurate transcripts; it is the downstream distortion that follows. When teams assume transcription outputs are accurate by default, errors become embedded in codes, themes, summaries and, ultimately, decisions. What looks like efficiency can quietly erode analytical integrity.

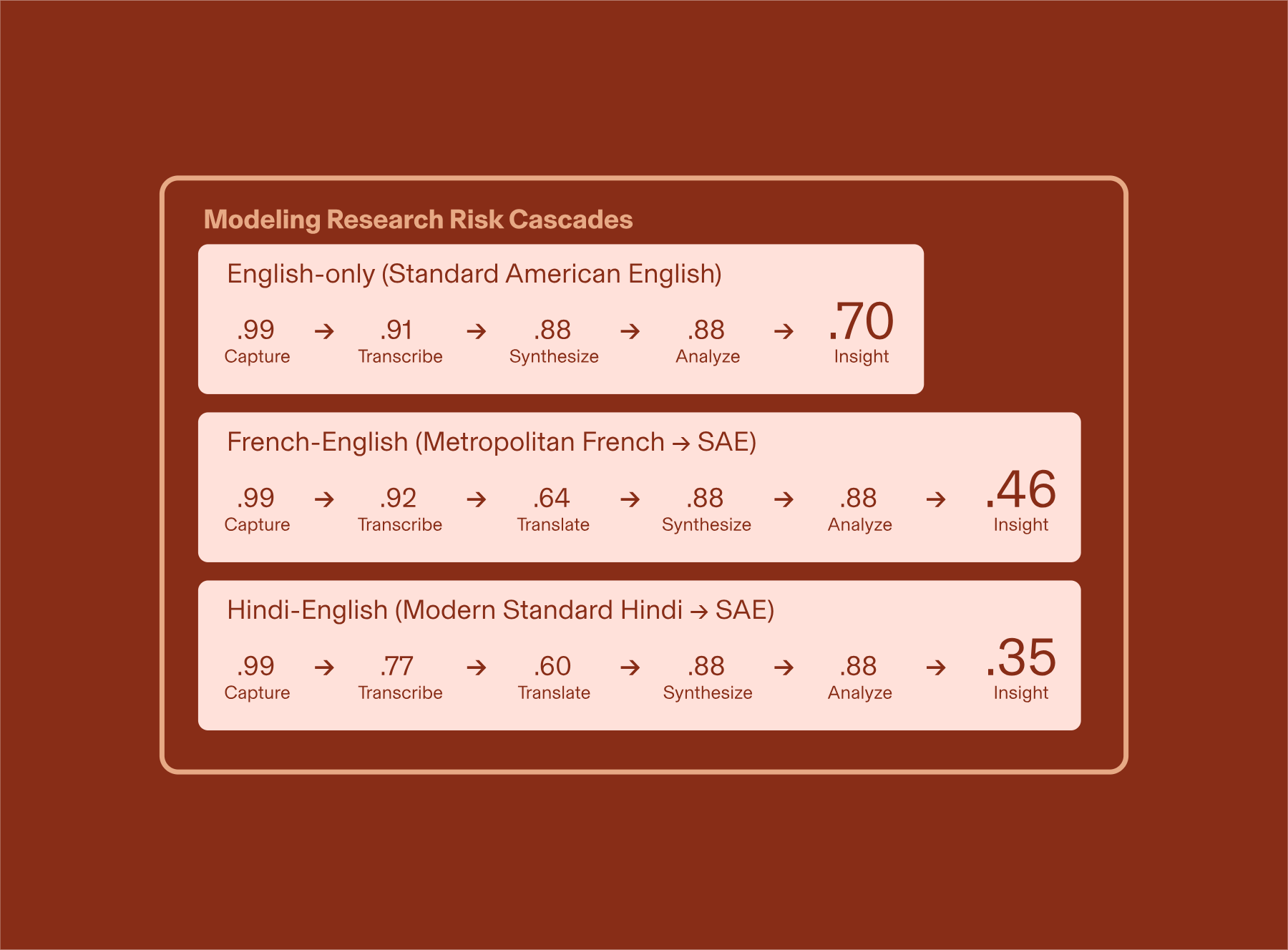

Lindsey DeWitt Prat (Bold Insight) has demonstrated the compounding negative effects of automation across research workflows, developing the “research risk cascades” framework to help teams navigate these challenges.

This model illustrates the compounding risk introduced by automation at each stage of the research pipeline. In English-only research, accuracy declines from .91 after transcription to .70 by the time insight is produced. The drop is significantly steeper in multilingual workflows: French-English accuracy falls from .92 to .46, while Hindi-English declines from .77 to .35 by the insight stage.
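One way to build intuition for why accuracy keeps falling is to treat the cascade as multiplicative: if each automated stage preserves only a fraction of what it receives, the losses compound. The Python sketch below illustrates that idea only. The stage names and per-stage figures are hypothetical and are not taken from the “research risk cascades” framework, whose underlying calculation is not described here.

```python
from itertools import accumulate
from operator import mul


def cumulative_accuracy(stage_accuracies):
    """Return the running product of per-stage accuracies."""
    return list(accumulate(stage_accuracies, mul))


# Hypothetical stages and per-stage accuracies, for illustration only.
stages = ["transcription", "coding", "theming", "insight"]
per_stage = [0.90, 0.95, 0.95, 0.95]

running = cumulative_accuracy(per_stage)
for name, accuracy in zip(stages, running):
    print(f"{name:<14}{accuracy:.2f}")
```

Even when every individual stage looks reliable in isolation, the end-to-end figure in this sketch drops to roughly 0.77, which is why checks at each hand-off matter more than a single check at the end.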

See Establish quality guardrails below for more information on how to guard against these challenges.

Delegate predictable and administrative work. If a task follows a clear blueprint (e.g. drafting, formatting, sorting), automate it to redirect time to higher-impact tasks.

Examples leaders shared:

Use AI as a "first pass" to get up to speed (rapid scanning, fact-finding and orienting yourself) but never treat it as the final source of truth.

Examples leaders shared:

Use AI to polish the medium, not the message. Deploy tools to smooth tone, translate languages and tailor outputs for specific audiences without altering the core insight.

Examples leaders shared:

“The lack of guardrails is scary. Different stakeholders are just coming out and working with these [tools directly]... and kind of skip us out of the process”

– Member of AI Research Council at Global Telecom

High-quality insight still starts with raw evidence—not with the layers of summarisation that AI produces on top of it. This principle becomes even more important as PMs and designers start pulling information directly from repositories and LLM-based knowledge systems.

Tools like NotebookLM show how AI can strengthen rigour, but only when insights maintain their traceability. They help PMs see where a claim came from rather than assuming it’s correct. However, relying on primary data alone forgoes the interpretive skill of the experienced researcher. As Lindsey DeWitt Prat (Bold Insight) cautions, “even with a multimodal model, the AI can pick up on words, but it can’t pick up on expressions…it misses context and any sort of multi-dimensionality and nuance.”

Her point underscores a central risk: while AI can surface linguistic patterns, it cannot perceive the texture, emotion or subtle contextual cues that often carry the real meaning. Unless the research team educates them, stakeholder teams will over-trust linguistic patterns and under-value human observation, which are the exact conditions in which false confidence spreads.

AI adds the most value when it tests human thinking, not when it attempts to replace it. Sam Ladner (Principal Researcher) described how she uses AI at the verification stage of qualitative analysis to pressure-test hypotheses, examining whether proposed conclusions are accurate and under what “exceptions”. In this way, AI tools become a means to strengthen robustness without ceding interpretive authority.

Soojin Jeong (Google DeepMind) echoed this stance, stating “you shouldn't trust AI’s result as it is. You’ve got to have your perspective and make sure it's the right information within the context you’re seeking [to understand].” AI earns its place in the workflow when it augments scrutiny without ever displacing the contextual judgement only researchers can provide. When AI is positioned as a second lens, teams learn to ask: “How do I know this is true?” rather than “What does the model say?”

To ensure accountability, leaders emphasised the need to keep a skilled human firmly in the loop. As one research director put it, the human must be “experienced in that space enough to kind of know the ins and outs and to be critical of the findings.” Even confident-sounding models can fabricate citations, invent evidence or overstate conclusions; only a knowledgeable researcher can detect when something is fundamentally off.

He went on to illustrate this vividly: “I said to [the AI model], that’s a very interesting take that you have. Where do you get that? It elaborated a little more. And I said, okay, but where is the data from this type of person you're telling me about? [The model said] it's on page 39 in the bar graph. And I'm like, there is no bar graph on that page.”

Researchers can train teams to spot errors and maintain vigilance, especially in workflows like product strategy or user needs where fabricated detail leads to costly decisions.

Transparency about AI use and the models involved is also becoming a central pillar of responsible practice. Naming the specific models used (e.g. “I’m using Claude Sonnet 4.5 for this analysis”, Lindsey DeWitt Prat, Director, Bold Insight) and explaining how AI supported the work helps to counter perceptions that quality is compromised or that researchers are “cheating” by hiding their use of automation. For Dave Snowden, forbidding the abstraction of “the AI” also strips models of the anthropomorphism that obscures the reality that they are simply imperfect tools.

Leaders stressed that openly disclosing AI use builds trust, clarifies the division of labour between humans and machines, and normalises responsible experimentation across the organisation.

A visual mock up of a document titled “Interview analysis.” The document is structured into multiple numbered sections of synthesised text. At the bottom, a notification indicates which AI tools were used to support compilation and synthesis. In this example, the note reads: “This analysis was synthesised with support from Claude Opus 4.5.”

LLMs can generate answers quickly, but they can also generate convincing errors. This adds validation labour to the research process: researchers must systematically check outputs to maintain ownership of, and proximity to, the data. As Judd Antin (Consultant and Academic) explained, “It’s extremely easy to find examples that validate your point of view, but basically impossible for [AI] to falsify them because falsification requires the idea of a pre-existing construction.”

Dan Busso (Meta) echoed the consequences: “I still feel like I need to go in and double-check everything…I’ve definitely had the experience of things coming out of the model that are not accurate or misrepresent what was actually being said.” Maintaining quality at the source depends on heightened vigilance toward LLM outputs, ensuring that insights and themes are tethered to the primary data.
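Part of this checking can be made routine. The following is a minimal Python sketch, assuming each AI-generated theme arrives with a supporting quote and that the raw transcript is available as plain text. The function and field names (`untraceable_claims`, `theme`, `quote`) are hypothetical, and the sample data is invented for illustration. It flags any theme whose quote does not appear verbatim in the transcript; whether a traced quote genuinely supports the theme remains a judgement for the researcher.

```python
import re


def normalise(text: str) -> str:
    """Lower-case and collapse whitespace so formatting differences don't matter."""
    return re.sub(r"\s+", " ", text).strip().lower()


def untraceable_claims(claims: list[dict], transcript: str) -> list[dict]:
    """Return the claims whose supporting quote is missing or not in the transcript."""
    haystack = normalise(transcript)
    flagged = []
    for claim in claims:
        quote = normalise(claim.get("quote", ""))
        # An empty quote counts as untraceable rather than trivially matching.
        if not quote or quote not in haystack:
            flagged.append(claim)
    return flagged


# Illustrative data only; not taken from any real study.
transcript = "I mostly listen on my commute.\nAt home I rarely open the app."
claims = [
    {"theme": "Commute listening", "quote": "I mostly listen on my commute"},
    {"theme": "Evening listening", "quote": "I always listen in the evening"},
    {"theme": "Unsupported theme", "quote": ""},
]

flagged = untraceable_claims(claims, transcript)
for claim in flagged:
    print(f"No source found for: {claim['theme']}")
```

Exact matching is deliberately strict: a paraphrased or "tidied" quote is flagged for review instead of being silently accepted.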

Use AI to critique your thinking, but never to create it. The human defines the "what" and the "why", while the AI only supports the "how".

Examples leaders shared:

Mandate "source tracing" for all AI claims. If the AI cannot point to specific evidence (a timestamp, a quote, a raw data point), the insight must be discarded.

Examples leaders shared:

Build mandatory "human-in-the-loop" checkpoints to prevent small errors from snowballing. Manually sample data at critical hand-offs.

Examples leaders shared:

“The greatest insights do not come from hearing 10 of 12 people agree on a topic, but hearing those two people say something different than the other 10.”

– Emanuel Moss, Senior Research Scientist at Intel Labs

Expert interpretation is ultimately what produces good, non-obvious research. Researchers carry an embodied understanding of users—their contexts, constraints, emotions and lived realities. This interpretive grounding is not optional; it is the heart of the work.

As a Senior Staff Research Manager in consumer tech put it, “a large value that we provide is talking about or discovering or observing things that are unsaid: what people don’t say and what they do not mean when they say something, or vice versa, what people mean when they do not say something.” This is the subtle, subjective terrain where qualitative insight lives, and where human judgement remains irreplaceable.

Lindsey DeWitt Prat (Bold Insight) offered a similar reminder: “AI is not going to sit in an interview with women in rural Nigeria and be able to tell me the underlying cultural, linguistic, financial, political and social context based on the transcripts alone.” A model can process words on a page, but it cannot feel the atmosphere of the room, sense the stakes of silence or map the multi-dimensional forces shaping a person’s response. Contextual, embodied and interpretive insight is human work. And it’s what keeps researchers essential in an AI-enabled future.

Lotta McAlpine (Spotify) echoed this reality: “I’m quite excited that we can use AI to speed things up… but we are the voice of the user, and the user is not an AI.” Researchers, she argued, hold the responsibility of representing real people, not machine-generated abstractions.

A visual mock-up of an interview transcript document. The interface shows a feature labeled “Add contextual note,” allowing the researcher to add context to the transcript. In this example, the researcher enters the note: “This claim contradicts revealed behavior observed earlier in the interview.”

As AI accelerates operational tasks, it frees researchers up to spend time sensing organisational dynamics. A key to this is fostering better and deeper relationships with stakeholders, which gets researchers in the room so they can get a feel for what’s really going on. Judd Antin (Consultant and Academic) noted that “the researcher’s job is actually more relational than it's ever been”. Organisational context, product intuition, and a feel for how decisions are made are now more critical than ever. Katie Johnson (Panasonic Well) noted that the researcher’s task today is to “triangulate our own hunches with stakeholder dynamics, and the political, business and commercial context”.

AI can empower researchers to think beyond insights and become more opinionated. As Judd Antin (Consultant and Academic) put it, “I hope ‘how might we’s’ die in a fire! They are one of the worst things on the planet”. AI can help researchers go beyond simple recommendations to deliver tangible artefacts that show rather than tell. As Soojin Jeong (Google DeepMind) explained, it’s “showing something…inspiring or bringing some engaging insight artefact to people”. AI can help prototype these recommendations, as long as they reflect the underlying human creativity, empathy, and craft of the researcher.

Lindsey DeWitt Prat (Bold Insight) explained that, if allowed by the client, her team would first generate their ideas through deep, manual inquiry, and only then use “a mixture of models” to validate them, check for gaps or identify inaccuracies. Taking a layered approach, where LLMs are deployed “as a judge” rather than as an insight generator, strengthens teams’ collective confidence in the findings.

Deep qualitative research is inherently “wasteful”: it produces a surplus of insights that fall outside the remit of project objectives and deliverables. Yet what’s left out often holds clues about what to research next. One research lead at a large tech company noted that internal initiatives to aggregate these insights have been useful, but are also costly in team time and organisation. AI is uniquely capable of reclaiming what’s left on the cutting room floor of analysis and turning these insights into novel questions that lead researchers to the edge of emerging strategic priorities. However, for researchers to use the “raw materials” of long-tail insights with AI, LLMs need to ingest them in highly structured formats (see Distribute established knowledge).

When LLMs are deployed uncritically, they become vending machines of insight: tools that dispense plausible outputs that can lure researchers into accepting quick, sensible-sounding answers. Katie Johnson (Panasonic Well) noted the temptation to take AI outputs as a signal that "[people don't] have to do anything anymore [and] don't own the responsibility of having to know what's going on." AI summarisation, in turn, creates a “massive engine for confirmation bias” according to Emanuel Moss (Intel Labs), reinforcing the narratives researchers already expect to see. When researchers cede their authority, they “forego the chance to maintain that sort of outlier-like, needle-in-the-haystack mentality” essential for staying at the edge of insights.

While LLMs enhance clarity, they can also undermine researchers’ confidence in sharing insights or challenging assumptions. As Katie Johnson (Panasonic Well) observed, “LLMs and AI specifically has eroded human confidence, which in turn has eroded productive debate without us really even noticing that we gave it up… you feel like you're not just disagreeing with a colleague, you're disagreeing with them plus OpenAI.”

When everyone relies on the same distilled summaries, discussions feel predetermined and dissent becomes harder to voice. To fully realise the benefits of augmented communication, organisations must maintain deliberate practices that protect debate and encourage independent judgement. It is critical that researchers stay in direct contact with “the edge”, as this is essential to justifying their value and legitimacy.

Prioritise in-person observation to capture the non-linguistic physical, emotional and cultural nuance models cannot see. Create structured methods for capturing this context rigorously.

Examples leaders shared:

Focus analysis on outliers and anomalies rather than the median patterns AI defaults to. Mine "surplus data" for early indicators of change.

Examples leaders shared:

Move beyond abstract "how might we" statements. Use AI to translate insight into artefacts, mock-ups and visuals that make recommendations tangible and testable.

Examples leaders shared:

“Now you could get a better sense of 45 documents instead of the two reports that you directly collected the data for. It's almost like a layer of organisational intelligence served at an individual level.”

– Research director at large tech company

LLM-driven knowledge management systems have already shifted how research teams can track demand across their organisation. A fintech VP described this shift: he can “literally go in and tell you that [our] platform has seen 1,500 users this month who have produced 4,500 search topics and looked at 1,100 individual artefacts…and say, this piece of work that we did that cost $200,000 [has] been used 852 times. So when I look at the justification of cost and time, I have solid answers for that.” As leaders face tighter budget scrutiny and ROI expectations, this kind of usage data provides rare, objective evidence of organisational impact. But the limits are equally clear.

These systems can help retrieve information quickly, but we should be cautious of relying on them for interpretation. As Judd Antin (Consultant and Academic) observed, “we now have tools, but they won’t tell us what the data means or what we should do.”
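The cost justification described above is simple arithmetic on retrieval counts. A minimal Python sketch using the two figures from the quote (a $200,000 study retrieved 852 times); `cost_per_use` is a hypothetical helper, and a real analytics export would have its own field names.

```python
def cost_per_use(project_cost: float, times_used: int) -> float:
    """Spread a study's cost across the number of times it was retrieved."""
    if times_used <= 0:
        raise ValueError("times_used must be positive")
    return project_cost / times_used


# Figures taken from the quote: a $200,000 study retrieved 852 times.
print(f"${cost_per_use(200_000, 852):,.2f} per use")
```

This works out to roughly $235 per retrieval. The number shows how often a study is consulted, not whether it was interpreted well, which is the limit the paragraph above points to.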

As organisations scale LLM-driven knowledge systems, the quality of what gets ingested becomes a critical determinant of trust. One research director at a large tech company emphasised that researchers must “guarantee that there are the highest quality sources in a notebook and they’re coming from vetted research” when building these systems. Poor inputs don’t just stay contained; they get amplified. As a fintech VP warned, if the underlying material isn’t sound, “you produce crap on top of crap and…lose in the long run.”

Heli Rantavuo, Head of Customer Insight at OP Financial Group, suggested that researchers will increasingly take on the role of “data operators”, responsible for ensuring repositories remain trustworthy, well-structured and legible for both AI ingestion and human teams. LLM-driven knowledge systems only reach their full potential when artefacts are thoroughly annotated, enabling verification and increasing collective confidence in the integrity of the data.

A visual mock up of a spreadsheet titled “AI ready golden data set”. The table spans six columns labeled Clip ID, Research area, Activity / context, Participant, Pattern tags, and Design implications. Each column is populated with stand-in entries representing data that has been cross-checked for quality and trustworthiness, making it suitable for both AI ingestion and use by research teams.
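As a rough illustration of what one row of such a data set could look like in code, the Python sketch below mirrors the six columns named in the mock-up. The class name, field types, sample values and the completeness check are all assumptions made for illustration; the mock-up itself specifies only the column labels.

```python
from dataclasses import asdict, dataclass, field


@dataclass
class GoldenClip:
    """One row of the data set; fields follow the six column labels in the mock-up."""
    clip_id: str
    research_area: str
    activity_context: str
    participant: str
    pattern_tags: list[str] = field(default_factory=list)
    design_implications: str = ""

    def missing_fields(self) -> list[str]:
        """Names of empty fields, so incomplete rows can be held back from ingestion."""
        return [name for name, value in asdict(self).items() if not value]


# Hypothetical stand-in entry; not real research data.
clip = GoldenClip(
    clip_id="C-001",
    research_area="Onboarding",
    activity_context="First-time setup at home",
    participant="P07",
)
print(clip.missing_fields())
```

Requiring every field to be filled before a clip is indexed is one simple way to keep annotation consistent for both AI ingestion and human readers.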

Leaders also pointed to the risk of structural bias in the repository itself. Because LLMs reflect the distribution of what they ingest, imbalances quietly distort what appears to be “true”. As a Head of Insight at a Global CPG noted, if 95% of the research comes from the U.S. and only 5% from Germany, teams may mistakenly interpret an American pattern as a global one. “If you’re going to draw a global conclusion,” she explained, “you need to know if it’s 75% America, 25% rest of the world… the outputs are not going to represent your Thai supermarket then, are they?”

Data curation is therefore not some back-office task—it is the foundation that determines whether knowledge systems support insight or silently skew it.
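A repository's composition can be checked before anyone draws a global conclusion from it. The Python sketch below assumes each study record carries a `region` label; the record format, the function names and the 50% threshold are hypothetical choices made for illustration, and the sample mirrors the 95%/5% split in the example above.

```python
from collections import Counter


def region_shares(studies: list[dict]) -> dict[str, float]:
    """Return each region's share of the studies in the repository."""
    counts = Counter(study["region"] for study in studies)
    total = sum(counts.values())
    return {region: n / total for region, n in counts.items()}


def over_represented(shares: dict[str, float], threshold: float = 0.5) -> list[str]:
    """List regions whose share exceeds the (arbitrary) threshold."""
    return [region for region, share in shares.items() if share > threshold]


# Illustrative repository: 19 US studies and 1 German study (95% / 5%).
studies = [{"region": "US"}] * 19 + [{"region": "Germany"}]

shares = region_shares(studies)
for region, share in shares.items():
    print(f"{region}: {share:.0%}")
print("Over-represented:", over_represented(shares))
```

Publishing these shares alongside any LLM-generated summary gives readers the context they need to judge how far a finding generalises.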

Write reports with clear metadata, context and hierarchy so LLMs can reliably ingest and retrieve them. Label bias and population data explicitly.

Examples leaders shared:

Actively steward the repository. Assign roles to retire obsolete data and ensure only "high quality" work is indexed to prevent knowledge pollution.

Examples leaders shared:

Use retrieval analytics to track what teams are actually searching for. Align research priorities with real organisational pull rather than guesswork.

Examples leaders shared:

Three years into the LLM era, the mandate is no longer simply to “adopt AI,” but to adopt it wisely. This playbook outlines a clear path forward for 2026: a reset of expectations, roles, and responsibilities that preserves the craft of research while embracing the efficiencies AI can offer.

The emerging needs and orientations of research functions are clear:

Done well, AI amplifies research’s strategic value, giving teams the time and space to do the work only humans can: interpret reality, sense what’s changing and guide decisions that matter.

This is a guide for early 2026. Expect these best practices to evolve as new capabilities emerge and leaders continue to adapt.

AI tools we used:

Quallie.ai: transcription of interviews, quote retrieval, theme generation

NotebookLM: synthesis, quote retrieval, ideation of tactics and inspiration for the 2 x 2 Matrix

ChatGPT v5.1: light editing, ideation of tactics